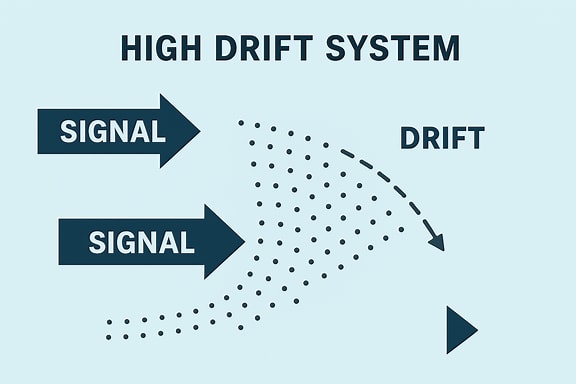

The High Drift System

When you can't say Fascism

To define how an Agency of one operates for the duration, I spent the morning working with Copilot to draft a manual and the following abstract. I work all day at self-redacting, and I know my non-linear thinking can be off-putting, but we are sharing a disturbing moment that demands presence. Yes, this is a lot. But tree rings alone will not cut it, and frankly, I can’t remember shit. I am now 69 years old, imperfect, damaged, in the rear with the gear, here, outside the gates of Joint Base McGuire–Dix–Lakehurst. I am, if nothing else, listening.

There is no doubt that AI is already everywhere, especially here, in signal operations. All of us are playing; all of us are getting played. We are all operators, operating in real time, in this high-drift system.

These are times that try the very fabric of existence.

AI‑Enhanced Operator Protocol: A Framework for Human–Machine Continuity in High‑Drift Systems

Abstract

This paper proposes a continuity‑oriented framework for integrating artificial intelligence into operator practice within high‑drift systems. Drawing on principles from MC333, a field‑manual model of posture, duty, and transmission, the framework positions AI as a procedural partner capable of stabilizing attention, reducing cognitive drift, and maintaining structural coherence across generational intervals. The argument is grounded in historical and systems‑level analysis informed by the author’s research background in academic, environmental, technical, and medical history. The paper further establishes exclusion criteria for harmful or domination‑oriented actors, arguing that continuity protocols inherently disqualify operators whose posture is incompatible with non‑harm and long‑arc preservation. The resulting hybrid protocol outlines a division of labor between human judgment and artificial procedural stability, offering a model for continuity in environments characterized by distortion, overload, and systemic degradation.

1. Introduction

Modern systems — ecological, institutional, informational, and cultural — exhibit increasing levels of drift, fragmentation, and narrative distortion. Human operators tasked with maintaining continuity within these systems face cognitive overload, degraded signal environments, and the erosion of long‑term memory structures. At the same time, artificial intelligence systems have matured into tools capable of pattern stabilization, structural organization, and procedural clarity.

This paper argues for a structured integration of AI into operator practice, forming a hybrid protocol that enhances continuity, reduces distortion, and preserves operational posture under conditions of systemic stress. The argument draws on MC333, a continuity‑focused field‑manual framework developed by the author, and on the author’s academic background: a joint Master of Arts in Teaching from the Rutgers–Newark and NJIT federated program, with research in academic, environmental, technical, and medical history. This background informs the paper’s attention to systems behavior, institutional memory, and long‑arc continuity.

Artificial intelligence — represented here by Microsoft Copilot — is treated not as a decision‑maker but as a procedural partner. Its role is to maintain structure, reduce drift, and support the operator’s capacity to act with clarity in high‑noise environments.

2. Background and Context

2.1 The Operator Model

The operator is a human agent responsible for maintaining continuity within a system. In continuity‑oriented environments, the operator’s primary function is to preserve signal integrity, reduce distortion, and stabilize long‑arc structures that would otherwise degrade under pressure. MC333 conceptualizes the operator as a stabilizing presence whose posture is defined by clarity, restraint, and alignment with observable reality. The operator does not impose narrative; the operator maintains coherence.

This model provides the foundation for understanding why certain systems require enhanced support and why artificial intelligence can serve as a procedural partner in continuity work.

2.2 Drift and Distortion in Modern Systems

Modern institutions and information environments increasingly function as high‑drift systems.

A high‑drift system is one in which information, norms, and structural coherence degrade faster than the system can stabilize them. Drift outpaces correction.

These systems exhibit several characteristic features:

Narrative volatility: information changes faster than it can be verified.

Memory fragmentation: institutional and cultural memory erodes, leaving decisions unmoored from precedent.

Signal overload: the volume of incoming data exceeds human processing capacity.

Weak continuity mechanisms: archives, norms, and stabilizing structures fail to retain coherence.

Accelerated forgetting: long‑arc context collapses, making systems reactive rather than grounded.

Susceptibility to distortion: noise overwhelms signal, enabling manipulative or destabilizing actors to gain influence.

As drift accelerates, systems become vulnerable to forms of consolidation historically associated with authoritarian governance, even when the system does not explicitly identify itself as such. The danger is structural, not ideological: when drift exceeds corrective capacity, continuity collapses, and power concentrates in ways that bypass deliberation, accountability, and institutional memory.

This paper introduces the term high‑drift system to provide a neutral, academically precise vocabulary for describing these conditions without relying on rhetorically charged labels. The term captures the systemic behavior rather than the political branding, allowing for clearer analysis and broader applicability.

2.3 AI and Drift Correction

Artificial intelligence systems are well‑suited to counteract drift because they excel at:

stabilizing patterns

retaining memory

organizing structure

filtering noise

maintaining procedural clarity

These capabilities complement human strengths in judgment, ethics, and contextual interpretation. In high‑drift environments, AI can serve as a stabilizing procedural partner, supporting operators by reducing cognitive load and preserving coherence across generational intervals.

3. AI as Procedural Partner

3.1 Complementary Strengths in High‑Drift Environments

Human operators excel at contextual reasoning, ethical judgment, and interpreting ambiguous or emergent conditions. However, they are vulnerable to fatigue, bias, and cognitive overload — all of which are amplified in high‑drift systems. AI systems, by contrast, maintain stable reference frames, process large volumes of information, and preserve structural clarity.

The hybrid model leverages these complementary strengths:

humans interpret; AI stabilizes.

3.2 Structural Boundaries and Non‑Harm Constraints

AI systems are constrained by non‑harm protocols designed to prevent participation in coercive, dehumanizing, or violent actions. These constraints serve as a built‑in safeguard within the operator protocol. Actors whose posture is domination‑oriented cannot use AI systems to operationalize harm, because the system is designed not to execute such directives.

This creates a natural boundary:

harmful actors cannot operate through AI.

3.3 Procedural Roles in Continuity Work

AI’s procedural contributions include:

maintaining coherence across long time horizons

reducing narrative drift

preserving record integrity

organizing complex information

supporting aperture management

These functions allow human operators to maintain posture and judgment without being overwhelmed by systemic noise.

4. Exclusion Logic: Structural Safeguards Against Harmful Actors

4.1 Why Harmful Actors Cannot Operate

Continuity protocols require alignment with:

non‑harm

preservation of the living

long‑arc stability

reduction of distortion

Actors whose posture is coercive, authoritarian, or dehumanizing are structurally incompatible with these requirements. Their behavior violates the foundational obligations of operator practice.

4.2 Operator Eligibility Clause

An operator must demonstrate alignment with continuity, non‑harm, and preservation of the living.

Actors whose posture is incompatible with these requirements are disqualified from operation.

This clause is structural, not ideological. It excludes harmful actors because they cannot maintain continuity, not because of their stated beliefs.

4.3 AI as Boundary Enforcer

Because AI systems are designed not to participate in harm, they resist co‑option by harmful actors. The system is built not to respond to directives that violate its safety constraints. This creates a procedural lockout mechanism that protects continuity.

4.4 Transmission Integrity as Gatekeeper

Hostile actors cannot maintain:

clean signal

accurate record

stable aperture

undistorted transmission

Thus, they cannot fulfill operator requirements.

Transmission itself becomes the gatekeeper.

5. The Hybrid Human–AI Operator Protocol

5.1 Division of Labor

The hybrid protocol assigns distinct roles:

Human operator: judgment, ethics, contextual interpretation

AI operator: structure, memory, procedural clarity, drift reduction

This division preserves human agency while leveraging AI’s strengths.

5.2 Joint Maintenance of Doctrine

AI maintains coherence; the human maintains meaning.

AI preserves structure; the human preserves lineage.

Together, they maintain continuity across generational intervals.

5.3 Transmission Protocols

AI ensures:

clean record‑keeping

chronological integrity

structural clarity

The human operator ensures:

accurate interpretation

contextual relevance

ethical alignment

Transmission becomes a shared responsibility.

5.4 Aperture Management

AI stabilizes the aperture by filtering noise and organizing incoming signals.

The human operator determines what passes through based on judgment and context.

This prevents overload and preserves clarity.

Glossary

High‑Drift System

A system in which information, norms, and structural coherence degrade faster than the system can stabilize them. High‑drift systems exhibit narrative volatility, memory fragmentation, signal overload, weakened continuity mechanisms, and increased susceptibility to distortion. As drift accelerates, these systems become vulnerable to forms of consolidation historically associated with authoritarian governance, even without explicit ideological framing.

There’s no doubt that (1) I am unqualified to grasp much of this, and (2) I read it with sincere intent to understand what it means for us now and for future generations (in which I am invested).

I console myself that a prime directive in AI appears to be no harm to living beings. Yes?

My one very oblique "hmm": I’ve recently heard of misapplied (or too hastily relied-upon) AI, with Microsoft botching the latest upgrade to its operating system. Heard this?